
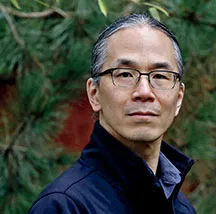

An Interview with Ted Chiang

I don't think we should create digital lifeforms.

Interviewer: Dominique Chen (Information Studies Researcher)

Ted Chiang, author of the most visionary science fiction of our time, masterfully crafts futures that are not only grounded in the latest scientific and technological insights but also pose profound allegorical inquiries into the ethical quandaries surrounding human freedom, will, consciousness, life, and happiness. "Good science fiction is about imagining the non-obvious consequences," Ted observes. Dominique Chen, an information studies researcher and editorial board member of DISTANCE.media, probes Chiang on the current and future trajectories of AI and ALIFE development, continuing to explore these complex themes.


We don't need a new category of being that is capable of suffering.

Dominique Chen: In addition to being a long-time fan of your novels, I am a researcher in Interaction Design and focus on the relationship between humans, microbes, and computers.

Today, I'd like to ask about your creative process, language, and ethical issues in AI development. I saw your presentation at the ALIFE2023 conference, where you discussed the Education of the Digients[★01], referring to your novella “The Lifecycle of Software Objects.”

I was struck by your criticism of the easy anthropomorphism often seen in the technological landscape, especially regarding AI and virtual characters. I found this topic fascinating, especially because I was touched by your depiction of Anna's[★02] attitude toward her digients.

What is the difference between Anna's attitude and the corporate manipulative anthropomorphism you criticized?

Ted Chiang: The difference is that the digients in the story actually have subjective experiences, while the chatbots we currently see do not. It's analogous to the difference between a dog and a virtual dog. If someone starves a biological dog, they are causing real suffering. If someone neglects to "feed" a virtual dog, the virtual dog experiences nothing.

Corporations may try to take advantage of humans' emotional response to an animation of a whimpering dog, but it is just an animation.

The digients in my story were capable of actual suffering. Not only physical suffering, but also emotional suffering, just as biological dogs are. It is entirely appropriate for humans to act to reduce both the physical and emotional suffering of animals, and I think it would likewise be appropriate for humans to act to reduce the suffering of digital organisms.

But we don't have anything remotely like digital lifeforms right now. All we have are animations and sound files.

DC: Right. So the fact that Jax was capable of physical and emotional suffering enabled an authentic connection between him and Anna. Should we then consider building the capacity for suffering into digital organisms, as a way to escape the manipulative corporate approach to technology?

TC: The connection Anna had with Jax[★03] was just as real as the connection a dog owner has with a dog. Perhaps a better comparison would be the connection that certain primatologists have with chimpanzees they have raised.

I don't think we should create digital lifeforms, precisely because we don't need a new category of being that is capable of suffering.

DC: I see. That is a poignant message to the IT industry.

TC: I think it is theoretically possible for us to create digital lifeforms that could experience both joy and suffering, but I think preventing suffering is a much higher priority.

Right now humans cause incredible suffering to animals, and animals are made of flesh and blood, so it is easy to see that they suffer. A digital organism would not be made of flesh and blood, and so a lot of people would dismiss its suffering. So if we created digital organisms, I think we would inevitably cause them huge amounts of suffering.

DC: In a sense, Anna and Derek's situation was a tragedy that our society should avoid.

TC: To be clear, this is an entirely hypothetical consideration. The issue we face now is that corporations will take advantage of our emotional responses to animations and sound files in order to extract money from us. As I said to Anil Seth[★04], this is also bad, but it is an entirely different kind of problem.

From a philosophical perspective, the problem of the suffering of digital organisms is interesting to me. But from a practical perspective, it is not something we will need to worry about for a very long time.

Questioning the assumption that more technology is always better.

DC: Thank you. Next, I want to connect this issue with your recent essay in The New Yorker and consider it from a more practical perspective.

In your article, you wrote:

“For technologists, the hardest work of all—the task that they most want to avoid—will be questioning the assumption that more technology is always better, and the belief that they can continue with business as usual and everything will simply work itself out.”

We, researchers of technology, should take your message seriously. But what kind of AI agent do you imagine would be preferable to one that acts as a consultant?

TC: In the New Yorker article you're quoting, my focus is on how the broad category of technologies that we call AI empowers capital at the expense of labor.

The question I'm interested in is, how can we use these technologies to empower labor? How could we use them to improve the lives of people who work? I don't have a good answer to this.

DC: Labor is a different situation from people nurturing digients.

TC: Very different. There are ways to use technology to disempower people as workers, and there are ways to use technology to extract more value from people as consumers. The latter is the category that virtual pets or romantic chatbots fall under.

DC: This makes me think that Blue Gamma's initial decision to do business with digients would be a mistake after all, if something like that ever happened in reality. Very interesting.

Related to that, how do you see the role of, for instance, ChatGPT in people's workplaces?

TC: Right now, ChatGPT isn't reliable enough to replace humans at almost anything. Every day there's another headline about how ChatGPT bungled some task.

We like the idea of technological solutions, because they promise immediate results.

DC: Is it possible for our society to develop technology that truly empowers people as workers? What do you think is needed to tackle this question?

TC: This is the central question.

Evgeny Morozov coined the phrase "techno-solutionism"[★05] to describe the tendency to view everything as a problem that can be solved with technology.

It's obvious why companies embrace this tendency, because a technological solution is a product that they can sell.

But what about problems whose solution is primarily political in nature?

For example, is there a technology that would help workers unionize? And even if such a technology exists, is that a product anyone can sell profitably?

DC: Right. That perspective is much needed, and it is one we could actually put into practice in our society. Techno-solutionism is embedded not only in corporations but also in us, the so-called customers.

This topic reminds me of what you said about people's desire (for digital lifeforms or a perfect language) in your talk with Anil Seth.

TC: Yes, we as consumers like the idea of technological solutions, because they promise immediate results.

DC: I agree. I believe reading science fiction narratives such as “The Lifecycle of Software Objects” helps us move past solutionism, because they entangle us in dilemmas that cannot be solved with technology.

TC: And social problems can't be solved by individuals acting in isolation. Buying a virtual girlfriend is something that a lonely guy can do by himself. Restructuring our society to reduce loneliness is not.

Good science fiction is about imagining the non-obvious consequences.

DC: I'd like to ask how you approach your two types of writing, science fiction and critical essays.

You write social satire in your fiction as well[★06], and your essays are as powerful as your stories. How do you distinguish your critical essays from your fiction? Are they interrelated?

TC: Writing essays is something I've only begun doing recently, and I'm still trying to figure out my relationship to it.

DC: I see. I had imagined you might draw on some of the topics covered in your essays to construct new stories.

TC: For me, the motivation to write fiction feels very different from the motivation to write essays. In my fiction, I'm mostly trying to tell a story, and hopefully evoke an emotional response.

DC: What kind of response did you expect to your essays?

TC: I didn't have any specific expectations. It's been a surprise to me that they have attracted as much attention as they have.

DC: What kind of response surprised you?

TC: I'm dismayed by the things that Silicon Valley does, but I think there are plenty of people doing a better job of pointing out Silicon Valley's shortcomings than me.

But people have been wanting to interview me about the essays, which is not something I anticipated.

DC: I suspect that is because the points you make as a science fiction writer land quite differently from those made by an engineer or researcher.

What do you think about the use of science fiction in academia? As a teacher, I have used SF prototyping, assigning my students to write very short dystopian science fiction stories to cultivate a critical perspective.

What do you hope scholars and students will gain from reading and writing science fiction?

TC: I think good science fiction is about imagining the non-obvious consequences. That's a useful skill in a lot of contexts.

Theodore Sturgeon said science fiction should ask the next question. It's easy to ask the first question, but figuring out the next one is hard.

DC: I couldn't agree more. Reading and writing fiction in general nurtures the skill of questioning what is not obvious, and that is exactly what scholars need to conduct critical research.

Can I ask you a last question?

TC: Sure.

DC: At ALIFE2023 in Sapporo, you talked about Umberto Eco’s concept of the Perfect Language, and you pointed out that there is a deeply rooted urge for that kind of idea, but that you think it is an unattainable dream. This conversation reminded me of Heptapod B in “Story of Your Life,” the written language used by the heptapods.

If we consider natural language one of our foundational, artificial communication technologies, could we find a way to evolve our language to foster better kinship? (This also connects to the idea of a technology for unionizing that you mentioned earlier.)

TC: Languages aren't static; they grow. Every language has potentially infinite expressive power, so we don't need a new language to improve our relationships with each other. Over time, our culture will generate new ideas and formulate ways of expressing those with existing language. Whatever we need to do, we can do with the languages we have.

DC: Thank you. So the growth of language cannot be compressed, just as life experience is incompressible, as you said in your talk at ALIFE2023.

It's about time we wrapped up this interview. Thank you so much for your time and generosity in accepting this interview! I've learned so much from your answers. We truly appreciate it.

TC: Thanks. Have a good afternoon!

DC: Thank you, and have a good night!

(September 14, 2023, 21:00-22:30 Seattle / September 15, 2023, 13:00-14:30 Tokyo)

★01 Digient: a virtual life form that appears in the work. It can learn language and behavior, and it displays curiosity and emotional expression.

★02 Anna is the main character of “The Lifecycle of Software Objects,” who struggles to secure a fair living environment for the digients she has raised.

★03 Jax, a digient raised by Anna, is one of the main protagonists of “The Lifecycle of Software Objects.”

★04 Refers to a related talk held with computational neuroscientist Anil Seth at ALIFE2023.

★05 Morozov, E., 2013. To Save Everything, Click Here: The Folly of Technological Solutionism. PublicAffairs.

★06 See, for instance, Instant Rapport, Binary Desire, and Sophonce as depicted in “The Lifecycle of Software Objects.”

[Interview Postscript]

Dominique Chen

For me, Ted Chiang's "Story of Your Life" (1998) holds a special significance. The actions of the protagonist, Louise Banks, made me deeply contemplate my own views on life and death and my relationship with my own child. Furthermore, the way Louise, a linguist, interacts with the alien Heptapods, and how this transforms her perception of time, raised for me the enduring question of whether communication inherently involves a degree of linguistic relativity.

In this interview with Ted, we discussed his short story "The Lifecycle of Software Objects" (2010), included in the Japanese paperback edition of Exhalation (Hayakawa Shobo), published in August 2023. We also delved into his essay "Will A.I. Become the New McKinsey?" published in The New Yorker in May 2023, as well as his talk and discussion with computational neuroscientist Anil Seth, held at ALIFE (Artificial Life International Conference) 2023 in Sapporo in July 2023. We explored Ted's thoughts on the current state of artificial intelligence.

I've always been fascinated by the tension between the logical structure supporting Ted Chiang's works and his careful authorial perspective that empathizes with the characters. In this regard, what impressed me in this interview was Ted's ability to question the current state of technological development while clearly delineating the boundaries with his own science fiction narratives. As someone engaged in research involving AI myself, I often find myself reflecting on his attitude.

In artificial life research, the pursuit of autonomous life models is a major theme. This differs significantly from recent engineering-focused AI development, which aims at plausible behavior. While ChatGPT may represent the pinnacle of AI models that automatically perform plausible intellectual actions, ALife seeks to explore the operation of autonomous life that can transcend human control. Takashi Ikegami pointed out in his essay published in DISTANCE that large language models like ChatGPT lack "desire," but I have long believed that experiencing suffering may be necessary to possess authentic (not just simulated) desires, needs, and intentions.

However, as Ted succinctly answered in this interview, there is an ethical dilemma in artificially creating beings capable of experiencing suffering. We must avoid creating new forms of domination and subjugation for the sake of human-centric pleasure or curiosity. I believe this is a pitfall that pragmatic or constructivist technologists like myself should avoid. In this sense, Ted's reference in the interview to Evgeny Morozov's critique of techno-solutionism, which is also discussed in recent HCI research, gives me a glimmer of hope for discussions of technologies that decenter human beings within a constellation of multitudes of nonhuman and more-than-human beings.

Lastly, Ted's response regarding language evolution was surprisingly grounded, given that he speculatively designed the fictional language Heptapod B, which has inspired many. He mentioned at ALIFE2023 that experiences of interaction with intelligent beings cannot be compressed, and this idea once again made me rethink the overall tendency toward technological acceleration.

This interview was conducted using Discord's chat feature, not a video conferencing system. The idea for a chat interview was based on a previous experience when I interviewed Keiichiro Shibuya, who proposed this format. I believe it was an unfamiliar format for Ted as well, but despite that, he took several minutes to carefully consider and sincerely respond to each of my questions. Furthermore, he graciously exceeded the initially planned 60 minutes and answered additional questions. I would like to express my gratitude and respect for this once again.

- Ted Chiang

- Science fiction writer. Born in 1967 in Port Jefferson, New York. Majored in computer science at Brown University. Debuted in 1990 with “Tower of Babylon,” which won the Nebula Award. His subsequent works have been highly acclaimed, winning the Hugo Award four times: for “Hell Is the Absence of God,” “The Merchant and the Alchemist’s Gate,” “Exhalation,” and “The Lifecycle of Software Objects.” His novella “Story of Your Life” was adapted into the 2016 film Arrival, directed by Denis Villeneuve, spreading the author’s name across genres and countries worldwide. Ted Chiang depicts futures that incorporate the latest findings of science and technology, while continuing to question, through allegorical tales, the ethical conflicts surrounding the freedom, will, awareness, life, and happiness of the people who will live there.

- Dominique Chen

- Information Studies Researcher. Born in 1981. Ph.D. (Information Studies). Professor at the School of Culture, Media and Society, Waseda University, since 2017. Former researcher at NTT ICC (InterCommunication Center) and co-founder of Dividual Inc., an IT startup in Tokyo. Now leads Ferment Media Research, exploring the relationships between humans, technology, and natural beings. Author of Words to Create the Future (Shinchosha, 2020), Creating Wellbeing: A Design Guide to Connecting 'Me' and 'Us' (BNN, 2023), and Cybernetic Religion (NTT Publishing, 2015). Editorial board member of DISTANCE.media.